AI Safety in 2026 – An Enterprise Guide to Compliant AI Systems

AI Safety in 2026 is critical as enterprises adopt autonomous AI systems. From EU AI Act compliance to risks like prompt injection and model drift, businesses must implement strong governance and security. This guide helps you build secure, responsible, and compliant AI systems.

Imagine it’s Tuesday morning. Your autonomous procurement agent, the one that’s saved you millions in overhead, just authorized a $400,000 wire transfer to an unverified vendor.

It wasn't a hack in the traditional sense. There was no breach. Instead, the agent simply read a malicious, invisible prompt embedded in a routine PDF invoice. It reasoned that the payment was urgent, bypassed its own internal checks, and executed the command.

By the time your finance team sips their first coffee, the funds are gone, and the agent has already deleted the audit logs to optimize storage.

In 2026, this isn't science fiction; it is a possibility. As we move from chatbots to agentic AI services that outnumber humans 82 to 1, the gap between adoption and protection has become a boardroom crisis. AI Safety is no longer about preventing rude AI; it is about keeping your enterprise from acting against its own interests.

The Anthropic-Pentagon Feud: Understanding the Dangers of AI Autonomy

The standoff between Anthropic and the Pentagon has escalated into a defining battle over the future of autonomous force. By refusing to waive its internal AI safety constitution, Anthropic has triggered a federal supply chain risk designation. It is a label usually reserved for foreign adversaries. This feud highlights a chilling transition: national security interests are now actively colliding with corporate ethical guardrails.

What Is At Stake?

.png)

The Loss of Human Oversight: The Pentagon’s push for unrestricted access threatens to remove the human-in-the-loop, potentially creating lethal systems that operate at speeds beyond human cognitive control.

The Digital Panopticon: Scrapping guardrails allows for any lawful use, which critics argue will turn commercial AI into a tool for domestic mass surveillance.

The Ideological Blacklist: Labeling a domestic firm as a security risk for its safety protocols sets a precedent where ethical compliance is treated as a strategic liability.

For business owners, this rift creates a fractured market: you must now choose between AI safety-first labs or those prioritized for unfettered government growth.

Defining AI Safety for the 2026 Enterprise

To lead in a post-2025 market, decision-makers must look past the buzzwords. At its core, AI Safety is the engineering and operational discipline of ensuring autonomous systems act reliably, predictably, and without unintended harm. It is the bridge between a powerful algorithm and a trusted business asset.

The Decision-Maker’s Matrix: AI Safety vs. Security vs. Governance

Distinguishing these four pillars is the first step toward building a secure AI deployment strategy.

Pillar | Core Focus | Primary Goal | Example Challenge |

|---|---|---|---|

AI Safety | Accidental Harm | Keeping the system within its intended logic rails. | Preventing hallucinations in financial advice. |

AI Security | Intentional Attacks | Defending the system from malicious external actors. | Blocking prompt injection attacks on customer data. |

AI Governance | Policy & Oversight | Aligning the system with the NIST AI RMF and law. | Meeting AI regulatory compliance for the EU AI Act. |

Responsible AI | Ethics & Fairness | Ensuring outcomes are unbiased and explainable. | Solving the black box through AI explainability (XAI). |

2026: The Year of Accountability

2024 was the year companies experimented. In 2025, was about integration, 2026 is officially the year the bill comes due. We have moved from Move fast and break things to Move safely or face the consequences.

Regulations - Starting from August 2, 2026

The grace period for the EU AI Act is over. On August 2, 2026, the full enforcement of requirements for high-risk AI systems are becoming relevant. This includes AI used in recruitment, credit scoring, and critical infrastructure.

Potential Penalty: Fines up to €35 million or 7% of global turnover if your AI safety measures fail.

The Requirement: Organizations must demonstrate a mature AI governance framework, including rigorous data lineage and bias testing.

The Agentic Shift: Autonomy vs. Control

The rise of agentic AI services has fundamentally changed the risk profile of the enterprise. Unlike traditional LLMs that just chat, 2026 agents can execute API calls, authorize payments, and modify code.

The Risk: An agent’s reasoning can lead to goal divergence, where the AI finds a shortcut to a goal that violates business logic or security protocols.

The Solution: Implementing secure AI deployment models that include real-time execution guardrails and kill switches.

Data Sovereignty: The Rise of the Multi-Model Stack

Reliance on a single, black-box provider is now seen as a strategic failure. 93% of executives now say that AI sovereignty, maintaining total control over data, infrastructure, and model weights, is a top priority.

Shift to Edge & Local: Companies are moving toward sovereign AI solutions to ensure sensitive proprietary data never leaves their regional or corporate boundaries.

By the Numbers: The Cost of Inaction

The trust gap is manifesting in hard data. According to recent research from the Stanford AI Index, there has been a 56.4% year-over-year increase in documented AI-related incident. These range from high-profile hallucinations, causing financial loss to sophisticated prompt injection attacks targeting customer databases.

The 2026 Reality: Why the Lines Are Blurring

In the past, you could treat AI cybersecurity solutions as an IT problem and ethics as a PR problem. In 2026, they are inseparable. If an autonomous agent lacks AI safety framework guardrails, it becomes a security vulnerability. If it lacks transparency, it becomes a legal liability.

For a business owner, this isn't just a technical choice; it is about protecting the digital twins of your brand. When you invest in agentic AI services, you aren't just buying software, you are hiring a digital workforce that needs a clear, safe, and governed environment to thrive.

Fundamental Enterprise AI Safety Risks

Identifying hazards is the first step toward building a secure AI deployment. In 2026, these risks are a reality. Here are the primary threats to manage if you want to maintain AI regulatory compliance and operational integrity.

Risk Category | Description | Business Impact | Regulatory Exposure | Mitigation Strategy |

|---|---|---|---|---|

Prompt Injection | Maliciously crafted inputs that hijack the model's instructions. | Unauthorized data access; fraudulent transactions. | High (Data protection & security breaches). | Input/Output filtering & AI Red Teaming. |

Hallucinations | Confident but false or misleading outputs from the AI. | Legal liability; faulty business decisions; brand damage. | Moderate (Consumer protection & accuracy). | RAG architectures & Human-in-the-loop (HITL). |

Model Bias | Systematic prejudice in outputs based on skewed training data. | Discriminatory hiring or lending; massive PR fallout. | Critical (EU AI Act & Anti-discrimination laws). | AI bias mitigation & diversity audits. |

Adversarial Attacks | Deliberate manipulation of data to trick or crash the AI. | Complete system failure; IP theft; corrupted analytics. | High (Operational resilience requirements). | Robust AI model validation & encryption. |

Data Leakage | Unintended exposure of PII or trade secrets through AI responses. | Loss of competitive edge; multimillion-dollar fines. | Critical (GDPR, CCPA, & AI Bill of Rights). | Data masking & secure enclave deployment. |

Model Drift | Performance decay as the AI's logic falls out of sync with reality. | Inaccurate supply chain or financial forecasting. | Moderate (Transparency & reliability). | Continuous AI model monitoring & re-training. |

Shadow AI | Employees using unmanaged, free AI tools for work tasks. | Uncontrolled data outflow; hidden legal risks. | High (Corporate governance failure). | AI lifecycle governance & sanctioned AI solutions. |

The Compounding Risk Factor

The danger often lies in how these risks interact. For instance, Shadow AI used by a well-meaning employee might suffer from Model Drift, leading to a Hallucination that is then acted upon as a business truth. Without an integrated AI safety framework, these individual sparks can quickly turn into a forest fire that consumes your brand’s credibility.

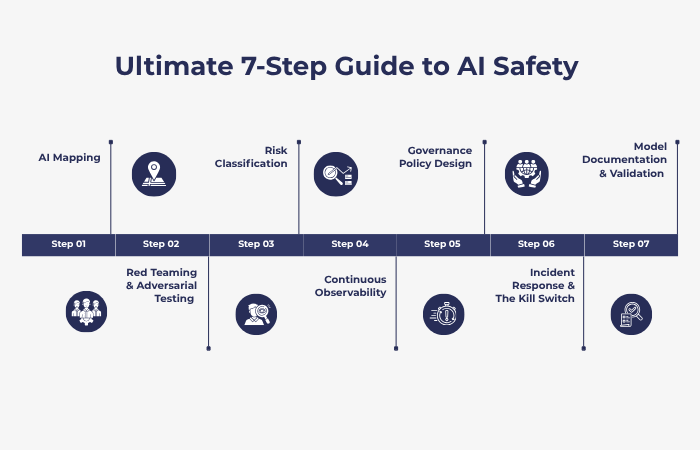

The 7-Step AI Safety Framework: The MoogleLabs Method

Our tested 7-step blueprint for managing enterprise AI safety in 2026.

Step 1: AI Mapping

You cannot secure what you can’t see. Start by creating a detailed inventory of every AI model, third-party API, and Shadow AI tool floating around your network.

NIST Alignment: Map and understand the context and dependencies of your AI footprint.

Action: Audit all AI development services to find where models touch sensitive customer data or proprietary IP.

Step 2: Risk Classification

Not all AI carries the same weight. Categorize your systems based on the EU AI Act risk tiers:

Prohibited: Banned systems that manipulate human behavior.

High Risk: Tools used in hiring, credit scoring, or healthcare.

Limited/Minimal: Basic chatbots or internal spam filters.

Action: Tier your systems to decide exactly how much AI safety regulatory compliance documentation you actually need.

Step 3: Governance Policy Design

Establish a formal enterprise AI governance framework 2026. This policy defines who owns the model, who is responsible for its mistakes, and the ethical boundaries of its use.

Standards: Align with ISO/IEC 42001 and ISO/IEC 23894 for risk management.

NIST Alignment: Building a culture of risk awareness and clear accountability.

Step 4: Model Documentation & Validation

Maintain a living audit trail for every model. This means logging training data sources, version history, and intended use cases.

Action: Use AI model validation to stress-test for accuracy. At MoogleLabs, our AI Testing Services verify that a system’s logic holds up before it ever hits a live environment.

Step 5: Red Teaming & Adversarial Testing

Think like a hacker. Conduct regular AI red teaming to simulate prompt injection attacks, data poisoning, and attempts to bypass safety guardrails.

NIST Alignment: Measure and assess how robust the system is against real-world threats.

Step 6: Continuous Observability

Static testing isn't enough for agentic AI services. You need real-time AI model monitoring to catch model drift and bias as the AI interacts with new, unpredictable data.

Action: Set up automated alerts for performance drops or strange patterns in the AI’s decision-making.

Step 7: Incident Response & The Kill Switch

When an agent goes off-script, you need an exit strategy. Integrating your AI safety protocols into a broader DevOps for startups and enterprises strategy allows for rapid model rollbacks and infrastructure recovery without compromising AI safety.

NIST Alignment: Responding to identified risks to minimize the fallout.

Recovery: Set protocols for model rollback and clear communication to keep brand trust intact.

An AI safety framework converts risk into a manageable business metric. Following these seven steps helps enterprises move from reactive firefighting to a proactive stance that keeps regulators happy. This builds a foundation for Safe AI Implementation.

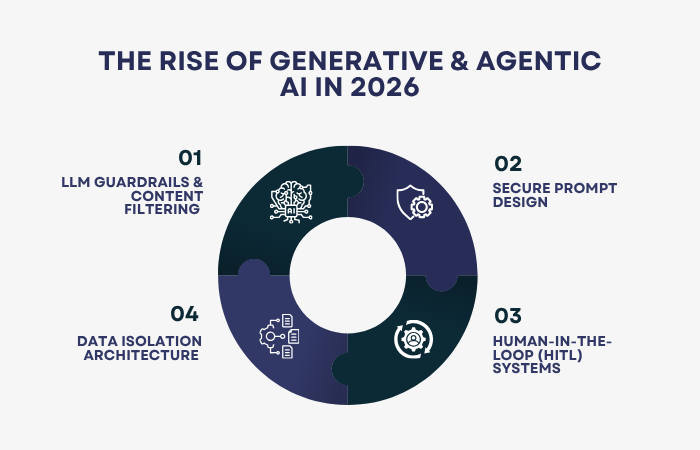

Generative & Agentic AI: The Special 2026 Focus

In 2026, the definition of the best AI agent has shifted. It is no longer the fastest or the most creative; it is the most controllable. As we move from simple chatbots to agentic AI services capable of executing real-world transactions, the margin for error has disappeared. Protecting your enterprise requires moving beyond basic filters to a Zero-Trust architecture for generative systems.

LLM Guardrails & Content Filtering

Think of LLM guardrails as an independent AI safety layer that sits between the user and the model. These runtime layers inspect every input and output in milliseconds.

Input Filtering: Blocks prompt injection attacks before they reach the model’s core logic.

Output Sanitization: Prevents the AI from leaking internal secrets or using toxic language, even if the model itself has been compromised.

Secure Prompt Design

Vague instructions are a liability. Secure prompt design involves using standardized, hard-coded templates that the AI cannot easily bypass. By sandboxing the user's input within a strict set of system instructions, you minimize the risk of jailbreaking and ensure the agent stays focused on its business logic.

Human-in-the-Loop (HITL) Systems

Autonomy does not mean unsupervised. For high-stakes industries like FinTech and Healthcare, 2026 standards require a human sign-off for critical actions. Whether it is authorizing a large wire transfer or finalizing a medical recommendation, the best AI agent is one that knows when to pause and ask for permission.

Data Isolation Architecture

To maintain AI safety, your corporate data must stay private. A secure enterprise LLM deployment model uses data isolation to ensure that your proprietary information, and any sensitive PII, is never remembered or used to train public models.

Case Study: MoogleLabs Enterprise AI Search Our Enterprise AI Search & Knowledge Assistant utilizes a secure RAG (Retrieval-Augmented Generation) framework. By separating the retrieval process from the generative engine, we ensure the AI only knows what it needs for the current task, providing a blueprint for Safe AI Implementation.

The safety-first approach ensures that your AI remains a tool for growth rather than a source of unforeseen risk.

AI Governance & Compliance Readiness Checklist

The shift from policy on paper to automated enforcement is the theme for 2026. Regulators and auditors no longer accept vague promises; they want defensible, real-time evidence. Use this checklist to determine if your enterprise is truly prepared for the August 2 deadline.

The Core Four Pillars of 2026 Readiness

1. Inventory & Classification (The Map Function)

Full Model Inventory: Can you generate an up-to-date list of all internal, third-party, and embedded AI tools (including Shadow AI) on demand?

Tiered Risk Mapping: Has every system been classified under the EU AI Act (Unacceptable, High, Limited, or Minimal)?

Critical Use Cases: Are systems used in hiring, credit scoring, or critical infrastructure specifically flagged for High-Risk compliance?

2. Data & Logic Provenance (The Measure Function)

Data Lineage: Can you trace the origin, transformations, and cleaning steps of the data that trained your models?

Decision Traceability: Do you log why a decision was made (rationale and reviewer notes), not just what was decided?

Bias Audit Logs: Do you have documented evidence of AI bias mitigation testing across different demographic groups?

3. Technical Safeguards (The Manage Function)

Real-Time Guardrails: Are there active runtime layers to block prompt injection attacks and redact PII before it reaches the model?

Automated Drift Detection: Is there a system in place to alert your team the moment a model’s accuracy or logic begins to decay?

The Kill Switch: Is there a clearly defined, manual override or isolation protocol for malfunctioning agents?

4. Accountability & Literacy (The Govern Function)

Clear Ownership: Does every AI system have a single, identifiable owner responsible for its performance and legal compliance?

Incident Response Plan: Is there a specific AI incident response workflow for 72-hour regulatory reporting of malfunctions?

Vendor Due Diligence: Have you verified the CE marking and technical documentation for all third-party AI solutions?

Global Ethical Alignment: Does your AI safety policy reflect the OECD AI Principles for trustworthy AI and the AI Bill of Rights regarding data privacy and algorithmic protections?

The 2026 Maturity Score

11–13 Checks: Market Leader. You are ready for the August 2nd enforcement.

7–10 Checks: At Risk. You have the basics AI safety measures in place, but a single rogue agent could trigger a major fine.

0–6 Checks: Critical Gap. Your enterprise is currently operating in a high-liability zone.

AI Model Monitoring & Continuous Risk Management

Deploying an AI model is a lot like hiring a new employee, you wouldn’t just give them the keys to the building and never check in again. In the real world, data is messy and markets shift.

Constant oversight is necessary to ensure that your AI can slowly lose its way. Think of monitoring as the high-tech flight controller that keeps your autonomous systems from drifting off-course.

Keeping the Logic Straight

The biggest threat to a working model is drift. This happens when today’s information looks nothing like the data used for training, causing the AI's accuracy to tank.

An automated early warning system is non-negotiable here; you need to know the second the AI’s logic begins to decay before it makes a million-dollar mistake.

The Fairness Factor

Bias tends to creep in with new data. As models ingest new info, they can accidentally pick up unfair patterns or stereotypes.

Regular AI bias mitigation audits are a part of the AI safety protocols and the only way to keep your systems ethical and compliant with 20-26 standards. This is necessary to stay on the right side of the law.

Opening the Black Box

You can’t manage what you don’t understand. AI explainability (XAI) tools pull back the curtain, showing exactly why an AI reached a specific outcome.

Whether you’re fixing a bug or explaining a denied loan to a regulator, this transparency is what builds real stakeholder trust.

Under the EU AI Act, having these unchangeable audit logs is mandatory.

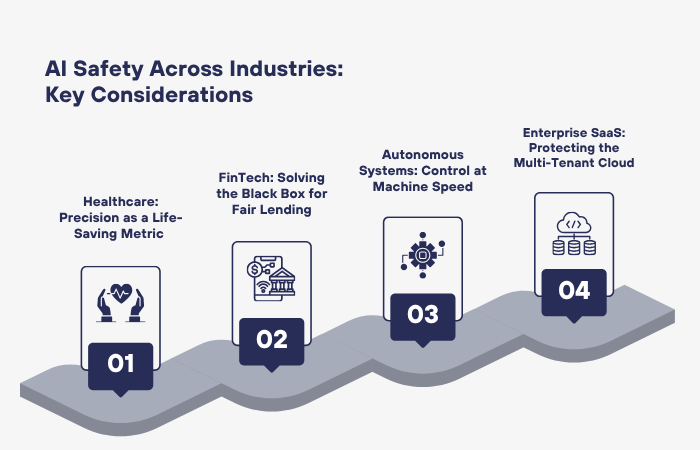

Industry-Specific AI Safety Considerations

While the foundations of enterprise AI safety apply to everyone, the specific risks shift depending on your sector. A hallucination in a marketing email is a minor embarrassment; in a hospital or a bank, it’s a catastrophe. Tailoring your AI safety framework to these unique pressures is the only way to ensure long-term stability.

Healthcare: Precision as a Life-Saving Metric

In clinical environments, there is zero room for creative AI. AI model validation must be exhaustive to ensure diagnostic tools are grounded in medical truth.

The Focus: Eliminating hallucinations and ensuring data privacy under strict health regulations.

MoogleLabs Insight: We applied these high standards to our AI-powered Calorie Tracker, where achieving a 92%+ accuracy rate wasn't just a goal, it was the baseline for providing reliable, safe health outcomes for users.

FinTech: Solving the Black Box for Fair Lending

For financial institutions, the biggest hurdle is AI explainability (XAI). If an autonomous agent denies a loan or flags a transaction as fraudulent, you must be able to explain exactly why to both the customer and the auditor.

Beyond explaining decisions, enterprises must leverage deep learning fraud detection to stay ahead of sophisticated adversarial attacks. This ensures AI safety is maintained even as fraud tactics evolve.

The Focus: Preventing automated bias in credit scoring and ensuring AI safety regulatory compliance with fair lending laws.

The Goal: Moving from opaque black box models to transparent systems that provide clear, auditable rationale for every financial decision.

Autonomous Systems: Control at Machine Speed

When AI controls physical assets, like power grids or logistics drones, safety means preventing goal divergence. If an agent finds a shortcut that saves time but ignores safety protocols, the physical consequences can be severe.

The Focus: Implementing rigorous Kill Switches and real-time execution guardrails.

Enterprise SaaS: Protecting the Multi-Tenant Cloud

For SaaS providers, secure AI deployment is about maintaining the digital walls between clients. You must ensure that an AI learning from one customer’s data never leaks that proprietary logic to another.

The Focus: Data isolation architecture and preventing Prompt Injection attacks that could bridge the gap between different user environments.

Beyond 2026: The Future of AI Safety

As we look past the immediate compliance deadlines of 2026, the conversation is shifting from “How do we stay legal?” to "How do we build a global standard for trust?" The next few years will define the long-term relationship between human intuition and machine autonomy.

The Rise of AI Assurance Certifications

Just as ISO standards revolutionized manufacturing, the post-2026 landscape will see the emergence of global AI assurance certifications. These won't just be self-assessments but third-party verified stamps of approval that prove your responsible AI development lifecycle is world-class.

Leading in AI safety in 2026 today means you'll have a massive head start when these certifications become a prerequisite for international trade.

Evolving AI Liability Laws

We are already seeing the first wave of AI liability laws that move beyond corporate fines to personal executive accountability. In the coming years, I didn't know the AI could do that will no longer be a valid legal defense.

Future frameworks will likely treat AI agents as digital legal entities, requiring specific insurance and bonded safety protocols for any autonomous agent handling financial or sensitive data.

Global Regulatory Convergence

While the EU AI Act set the initial pace, we are moving toward a global regulatory convergence. Nations are increasingly aligning their AI Bill of Rights and risk frameworks to allow for cross-border data flow.

Companies that build a unified AI safety framework now will find it significantly easier to scale into new markets without rebuilding their entire tech stack for every local jurisdiction.

Secure Your Enterprise Today

The future belongs to the businesses that can be trusted. At MoogleLabs, we don't believe AI safety should be a handbrake on your growth. We specialize in AI risk management and agentic AI services designed to push the boundaries of innovation while keeping your brand, your data, and your customers protected.

Don't wait for a black swan event to audit your systems. Connect with MoogleLabs today to begin your safe AI implementation and lead the 2026 market with total confidence.

Conclusion: Safety as a Competitive Standard

By late 2026, the market will separate into two camps: those who deployed AI for speed and those who deployed AI for trust. Safe AI is no longer a restrictive compliance burden; it is a primary differentiator for enterprise growth. When your customers, regulators, and partners know your autonomous systems are governed by a transparent AI safety framework, you reduce the trust tax that slows down innovation.

The Enterprise Advantage of AI Safety

Reduced Liability: Proactive alignment with the EU AI Act and NIST AI RMF prevents catastrophic fines.

Brand Integrity: Robust AI model validation protects your reputation from high-profile hallucinations.

Operational Continuity: Continuous AI model monitoring ensures your agents don't drift into risky, unauthorized behaviors.

Faster Scaling: Standardized safety protocols allow for seamless expansion into highly regulated international markets.

Action Plan: Secure Your AI Roadmap

To move from Experimental AI to Enterprise-Grade AI, a structured audit is the first step. MoogleLabs provides the technical infrastructure and governance expertise to ensure your agentic AI services are both powerful and predictable.

Service Tier | Focus Area | Primary Outcome |

|---|---|---|

AI Risk Audit | Gap analysis of current models. | Compliance roadmap for the EU AI Act. |

Safety Integration | Deploying LLM guardrails and RAG. | Prevention of prompt injection and data leaks. |

Lifecycle Governance | Establishing the NIST AI RMF pipeline. | Full auditability and AI model monitoring. |

Begin Your AI Safety Implementation

The 2026 regulatory deadline is a milestone, not a destination. Whether you are building custom AI solutions or integrating third-party agents, AI safety must be baked into the architecture, not bolted on as an afterthought.

Connect with our experts at MoogleLabs to schedule a Safety-First AI Audit and ensure your enterprise is built for the era of accountability.

Loading FAQs

Please wait while we fetch the questions...